Ye old timey IoT, what was it anyway and does it have an upgrade path?

Previously on the internet

In the beginning the Universe was created.

This has made a lot of people very angry and been widely regarded as a bad move. (Douglas Adams, “The Restaurant at the End of the Universe”)

Then someone had another great idea: create computers, the Internet, and the World Wide Web. Ever since, it's been a constant stream of all kinds of multimedia content that one might enjoy as a kind of compensation for the universe's previous blunders. (As these things usually go, some people have regarded these as bad moves as well.)

Measuring the world

Some, however, enjoy a completely different type of content. I am talking about data, of course. The need to understand and measure the world around us has been with us since the dawn of mankind, but an interconnected worldwide network combined with cheaper and better automation accelerated our efforts massively.

Previously you had to trek to the ends of the earth, usually at great financial and bodily risk, to set up test equipment, or to monitor things with your own senses and write the readings down on a piece of paper. But then, suddenly, electronic sensors and other measurement apparatus could be combined with a computer to collect data on-site and warehouse it. (Of course, back then we called a warehouse of data a “database” or a “network drive” and had none of this new age poppycock terminology.)

Things were great: no need any longer to put your snowshoes on and risk being eaten by a vicious polar bear when you could sit comfortably in your chair at a desk with a brand new IBM PS/2 on it and check the measurements through this latest invention called the Mosaic web browser, or a VT100 terminal if your department was really old-school. (Oh, those were the days.)

These prototypes of IoT devices were very specialized pieces of hardware for very special use cases, built for scientists and other rather special types of folk, and no Internet-connected washing machines were in sight yet. (Oh, in hindsight ignorance is bliss. Is it not?)

The rise of the acronym

First, home appliances used Dual-Tone Multi-Frequency signaling, or DTMF. You put your phone next to the device, pushed a button on it, and the thing would scream an ear-shattering series of audible tones into your phone, which then relayed them to a computer somewhere. Later, if you were lucky, a repairman would come over, completely disregard the self-diagnostic report your washing machine had just sent over the telephone lines, and usually either fix the damn thing or make the issue even worse while cursing computers all the way to hell. (Plumbers make bad IT support personnel and vice versa.)

From landlines to wireless

So because of this, and for many other reasons, someone had the great idea to connect devices like these directly to your Internet connection and cut the middleman, your phone, out of the equation altogether. This made things simpler for everyone. (Except for the poor plumber, who still continued to disregard the self-diagnostic reports.) And everything was great again for a while until, one day, we woke up and there was a legion of decades-old washing machines, TVs, temperature sensors, cameras, refrigerators, ice boxes, video recorders, toothbrushes, and a plethora of other “smart” devices connected to the Internet.

Internet of Things, or IoT for short, describes these devices as a whole and the phenomenon, the age, that created them.

Suddenly it was no longer just special people with specialized hardware who had connected smart devices collecting data. Now it was for everybody. (This has, yet again, been regarded as a bad move.) Look past the obvious security concerns that this fever of connecting every single (useless) thing to the Internet has created, and we can also see the benefit. The data flows, and data is the new oil, as the saying goes.

And there is a lot of data

The volume of data collected with all these IoT devices is staggering, and simple old-timey daily FTP transfers to a central server are no longer a viable way of collecting it. We have come up with newer approaches like REST, WebSockets, and MQTT to ingest real-time streams of new data points into our databases from all of these data-collecting devices.

Eventually, all backend systems were migrated or converted into data warehouses that accepted data only over these new protocols and were therefore fundamentally incompatible with the old IoT devices.

What to do? Declare them obsolete and replace them all, or is there something that can be done to extend the lifespan of those devices and keep them useful?

The upgrade path, a personal journey

As an example of an upgrade path, I shall share a personal journey on which I embarked in the late 1990s. By now this is a macabre exercise in fighting inevitable obsolescence, but I have devoted tears, sweat, and countless hours over the years to keeping these systems alive, and today's example is no different. The service in question runs on a minimal budget and volunteer effort, so heavy doses of ingenuity are required.

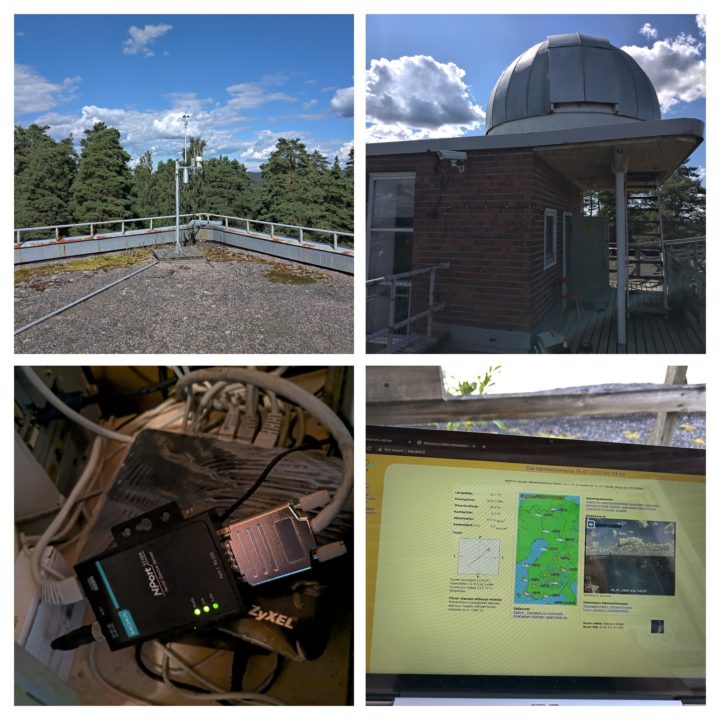

Even though Finland is located near, and partly inside, the Arctic Circle, there are no polar bears around, except in a zoo. Setting up a Vaisala weather station is not something that will cause a furry meat grinder to release your soul from your mortal coil; no, it is actually quite safe. Due to a few circumstances and happy accidents, it is just what I ended up doing two decades ago when setting up a local weather station service in the city of Hämeenlinna. The antiquated '90s web page design is one of those things I look forward to updating at some point, but today we are talking about what goes on in the background. We are talking about data collection.

The old, the garbage and the obsolete

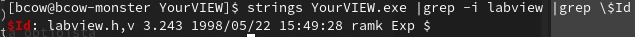

Here, we have the type of equipment that measures and logs data points about the weather conditions at a steady pace. The measurements are then read out by specialized software on a computer placed next to it, since the communication is just plain old ASCII over a serial connection. The software is old. I mean really old. Actually, I am pretty sure that some of you reading this were not even born back in 1998.

This creates a few problematic dependencies, and the problems tend to get bigger with passing time.

The first issue is an obvious one: an old and unsupported version of the Windows operating system. No new security patches or software drivers are available, which is a huge problem in any IT scenario, but still a common one in any aging IoT solution.

The second problem: no new hardware is available. No operating system support means no new drivers, which means no new hardware if the old one breaks down. After a decade of scavenging this and that piece of obsolete computer hardware, pulling together a somewhat functioning PC is a daunting task that keeps getting harder every year. People tend to just dispose of their old PCs when buying a new one. The half-life of old PC “obtanium” is really short.

Third challenge: one can’t get rid of Windows 2000 even if one wanted to, since the logging software does not run on anything newer than that. And, yes, I tried even black magic, voodoo sacrifices, and Wine under Linux, to no avail.

And finally, the data collection itself is a problem: how do you modernize something that uses its own data collection/logging software and integrate it with modern cloud services when said software was conceived before modern cloud computing even existed?

Path step 1, an intermediate solution

As with any problem of a technical nature, investigating it yields several solutions, but most of them are infeasible for one reason or another. In my example case, I came up with a partial solution that lets me continue building on top of it later. At its core this is a cloud journey, a cloud migration, not much different from those I work on daily with our customers at Solita.

For the first problem, Windows updates, we really can’t do anything without upgrading Windows to a more recent and supported release, and unfortunately the data logging software won’t run on anything newer than Windows 2000. Lift and shift it is, then. The solution is to virtualize the server and bolster the security around the vulnerable underbelly of the system with firewalls and other security tools. This has the added benefit of improving the service level, since the virtual server no longer suffers from server/workstation hardware failures or from network and power outages.

However, since the weather station communicates over a serial connection (RS-232), we also need to somehow bridge the added physical distance. There are many solutions, but I chose a Moxa NPort 5110A serial server for this project. Combined with an Internet router capable of creating a secure IPsec tunnel between the cloud and the site, and with Moxa’s Windows RealCOM drivers, it makes the on-site serial port securely available to the remote Windows 2000 virtual server.

How about modernizing the data collection, then? Luckily, YourVIEW writes the received data points into a CSV file, so it is possible to write a secondary logger in Python that sends those data points directly to a remote MySQL server as they become available.
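A minimal sketch of what such a secondary logger could look like, under loudly stated assumptions: the two-column CSV layout (timestamp, temperature), the `observations` table, and the function names are all hypothetical, not the actual file format or schema. To keep the example runnable on its own, `sqlite3` stands in for a MySQL driver; with a MySQL DB-API driver the placeholder would typically be `%s` instead of `?`.

```python
import csv
import sqlite3  # stand-in for a MySQL DB-API driver in this sketch


def read_new_rows(path, offset):
    """Return (rows, new_offset) for complete CSV lines appended after offset."""
    with open(path, "rb") as f:
        f.seek(offset)
        chunk = f.read()
    # Consume only up to the last newline, so a row that is still being
    # written by the logging software is left for the next poll.
    end = chunk.rfind(b"\n") + 1
    text = chunk[:end].decode("ascii", errors="replace")
    rows = [row for row in csv.reader(text.splitlines()) if row]
    return rows, offset + end


def store_rows(conn, rows, placeholder="?"):
    """Insert (timestamp, temperature) rows; hypothetical table and columns."""
    sql = (
        "INSERT INTO observations (observed_at, temperature_c) "
        f"VALUES ({placeholder}, {placeholder})"
    )
    cur = conn.cursor()
    cur.executemany(sql, [(r[0], float(r[1])) for r in rows])
    conn.commit()
    return len(rows)
```

In use, a small loop would call `read_new_rows` every few seconds, pass the result to `store_rows`, and remember the returned offset so that each data point is sent only once.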

Path step 2, next steps

What was previously a vulnerable and obsolete pile of scavenged parts is still a pile of obsolete junk, but now it has a way forward. Many would have written this data collection platform off as garbage and thrown it away, but with this example I hope to demonstrate that everything has a migration path, and that with proper lifecycle management your IoT infrastructure investment does not need to be only a three-year plan: one can expect returns for decades.

An effort on my part is ongoing to replace the YourVIEW software altogether with a homebrew logger that runs in a Docker container and publishes data with MQTT to the Google Cloud Platform IoT Core. IoT Core together with Google Cloud Pub/Sub makes for an unbeatable data ingestion framework. From there the data can be stored in, for example, Google Cloud SQL and/or exported to BigQuery for additional data warehousing, and finally visualized in, for example, Google Data Studio.
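The publishing side of such a homebrew logger might be sketched roughly as follows. This is an illustration under assumptions, not the actual implementation: the device ID, the field names, and the function names are hypothetical, the topic follows the `/devices/<device-id>/events` form that IoT Core's MQTT bridge used for telemetry, and the MQTT client itself is left out by injecting a `publish(topic, payload)` callable so the example runs without a broker.

```python
import json


def telemetry_topic(device_id):
    """Build a telemetry topic in the /devices/<device-id>/events form."""
    return f"/devices/{device_id}/events"


def publish_reading(publish, device_id, reading):
    """Serialize one reading as JSON and hand it to the injected publish callable."""
    payload = json.dumps(reading, sort_keys=True)
    publish(telemetry_topic(device_id), payload)
    return payload


# Stand-in for a real MQTT client: collect what would have been published.
sent = []
publish_reading(
    lambda topic, payload: sent.append((topic, payload)),
    "weather-station-1",  # hypothetical device ID
    {"observed_at": "2020-01-01T12:00:00Z", "temperature_c": -3.5},
)
```

Keeping the MQTT client behind a plain callable also means the same logger could be pointed at a different broker or ingestion service later without touching the serialization code.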

Even though I use the term “logger” here, the term “gateway” would be suitable as well. Old systems require interpretation and translation to be able to talk to modern cloud services. Either a commercial solution exists from the hardware vendor or, as in my case, I have to write one.
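To make the "interpretation and translation" part concrete, here is a small sketch of the kind of parsing a gateway does. The `KEY=value` line format and the field names below are purely hypothetical and are not the real message format of the weather station; the point is only the shape of the translation from a raw ASCII line to structured data.

```python
def parse_ascii_line(line):
    """Translate one 'KEY=value KEY=value ...' ASCII line into a dict of floats."""
    reading = {}
    for field in line.strip().split():
        key, sep, value = field.partition("=")
        if not sep:
            continue  # skip tokens that are not KEY=value pairs
        try:
            reading[key.lower()] = float(value)
        except ValueError:
            continue  # drop fields whose value is not numeric
    return reading


# Hypothetical line as it might arrive over the serial connection.
example = parse_ascii_line("TA=-3.5 RH=87 WS=/// junk\r\n")
```

A dictionary like this is easy to serialize to JSON or insert into a database, which is all the modern end of the pipeline needs.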

Together we are stronger

I would like to think that my very specific example above is unique, but I am afraid it is not. In principle, all integration and cloud migration journeys have their unique challenges.

Luckily, modern partners like Solita, with extensive expertise in cloud platforms such as the Google Cloud Platform, Amazon Web Services, or Microsoft Azure, and in software development, integration, and data analytics, can help a customer tackle these obstacles. Together we can modernize and integrate existing data collection infrastructures, for example on the web, in healthcare, in banking, on the factory floor, or in logistics. Throwing existing hardware or software into the trash and replacing it with something new is time-consuming, expensive, and sometimes easier said than done. Therefore, carefully planning an upgrade path with a knowledgeable partner might be a better way forward.

Even when considering an investment in a completely new data collection solution, integration usually becomes a requirement at some stage of the implementation, and Solita, together with our extensive partner network, is here to help you.